The Architecture of Modern Insights: Navigating the Time Series Landscape

The digital transformation of global industries has generated an unprecedented volume of data that is fundamentally defined by the moment it was created. From tracking the heartbeat of a power grid to monitoring the volatility of decentralized finance, organizations require specialized infrastructure to maintain operational clarity. Many technical leaders now rely on time series database (TSDB) engines to manage these high-velocity streams, so that data is not only stored securely but can also be retrieved quickly for real-time analysis. These engines are specifically engineered to handle the relentless "write-heavy" nature of modern telemetry, providing a stable foundation for the next generation of industrial intelligence.

The Structural Logic of Temporal Data

Traditional relational databases were designed for transactional integrity: think of a bank transfer, where two account balances must be updated together or not at all. In contrast, time series databases are optimized for a continuous "append-only" flow. Because updates to historical data are rare, these systems can use highly efficient storage formats that prioritize speed and massive ingestion rates. By treating time as a primary index, the database can organize data points chronologically on the physical disk, so a query over a time range reads one contiguous run of data instead of seeking across scattered records.
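The append-only, time-indexed layout described above can be sketched in a few lines. This is a minimal in-memory illustration, not how any particular engine is implemented; the class name `AppendOnlySeries` and its methods are hypothetical.

```python
from bisect import bisect_left, bisect_right


class AppendOnlySeries:
    """Toy in-memory series that keeps points sorted by timestamp."""

    def __init__(self):
        self.timestamps = []  # seconds, always non-decreasing
        self.values = []

    def append(self, ts, value):
        # Reject out-of-order writes so the timestamp list stays sorted.
        if self.timestamps and ts < self.timestamps[-1]:
            raise ValueError("out-of-order write")
        self.timestamps.append(ts)
        self.values.append(value)

    def range(self, start, end):
        # Binary search finds the slice [start, end] without a full scan.
        lo = bisect_left(self.timestamps, start)
        hi = bisect_right(self.timestamps, end)
        return list(zip(self.timestamps[lo:hi], self.values[lo:hi]))


series = AppendOnlySeries()
for ts, value in [(100, 20.1), (101, 20.2), (102, 20.2), (105, 20.4)]:
    series.append(ts, value)

window = series.range(101, 103)
print(window)
```

Because new points only ever land at the end, writes are cheap, and a time-range query touches one contiguous slice.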

This chronological organization also makes "downsampling" straightforward. Downsampling is a process where high-resolution data (like per-second readings) is summarized into lower-resolution aggregates (like hourly averages) for long-term storage. This ensures that while the most recent data is available in granular detail, the database remains lean and performant even as it accumulates years of historical context.
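As a rough sketch of downsampling, the function below groups raw points into fixed-width time buckets and averages each bucket. The function name `downsample` and the sample data are illustrative assumptions; real engines usually run this as a scheduled or continuous background task.

```python
from collections import defaultdict
from statistics import mean


def downsample(points, bucket_seconds):
    """Group (timestamp, value) pairs into fixed buckets and average each."""
    buckets = defaultdict(list)
    for ts, value in points:
        # Align each timestamp to the start of its bucket.
        bucket_start = ts - (ts % bucket_seconds)
        buckets[bucket_start].append(value)
    return {start: mean(vals) for start, vals in sorted(buckets.items())}


# Four readings spread over two hours (timestamps in seconds).
raw = [(0, 10.0), (1800, 20.0), (3600, 30.0), (5400, 50.0)]
hourly = downsample(raw, 3600)
print(hourly)
```

Each hour collapses to a single averaged point, which is the value that would be kept for long-term storage.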

Efficiency Through Advanced Encoding

One of the most significant advantages of a dedicated temporal engine is its ability to compress information. Because sensor data often changes incrementally (a temperature reading moving from 20.1 to 20.2, for example), specialized algorithms can store only the "delta", the difference between consecutive points, rather than the full value every time. This approach, combined with sophisticated timestamp encoding, allows companies to store billions of data points in a fraction of the space required by standard flat files.
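A minimal sketch of delta encoding follows. It assumes readings have been scaled to integers (tenths of a degree, so 20.1 becomes 201) to keep the arithmetic exact; production engines typically use more elaborate schemes, and the function names here are hypothetical.

```python
def delta_encode(values):
    """Keep the first value, then only the difference from the previous one."""
    if not values:
        return []
    encoded = [values[0]]
    for prev, curr in zip(values, values[1:]):
        encoded.append(curr - prev)
    return encoded


def delta_decode(encoded):
    """Rebuild the original values by accumulating the deltas."""
    decoded = []
    total = 0
    for i, item in enumerate(encoded):
        total = item if i == 0 else total + item
        decoded.append(total)
    return decoded


# Temperatures in tenths of a degree: 20.1, 20.2, 20.2, 20.3, 20.1
readings = [201, 202, 202, 203, 201]
encoded = delta_encode(readings)
print(encoded)
print(delta_decode(encoded) == readings)
```

The deltas are small numbers clustered around zero, which a variable-length or bit-packed encoding can store in far fewer bits than the full readings.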

Lowering the storage footprint does more than just save on hardware costs; it directly impacts query performance. When the database needs to scan a year's worth of data, having that data compressed means fewer bytes are moved from disk to memory, so dashboards can load in seconds rather than minutes. This efficiency matters most in mission-critical monitoring, where every second of delay can have real-world consequences.

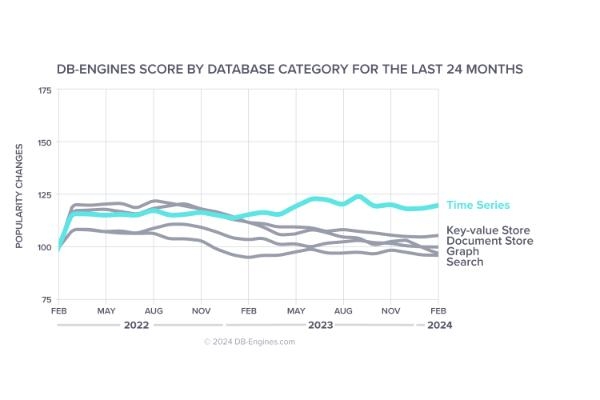

Decoding the Time Series Database Ranking

Choosing the right technology requires an understanding of how different systems perform under the stress of production workloads. When we look at time series database rankings, the systems that consistently rise to the top are those that offer a balance of high ingestion throughput and low-latency query response. The market is currently moving away from monolithic, single-node architectures toward distributed systems that can scale horizontally across multiple data centers, providing the resilience needed for global operations.

Furthermore, the ranking reflects the growing importance of "developer experience." Platforms that support standard query languages like SQL are gaining traction because they allow teams to leverage existing skills without learning a proprietary syntax. The ability to integrate seamlessly with visualization tools and machine learning frameworks has become a primary differentiator for the leaders in this space.

Industrial Intelligence and Predictive Maintenance

In the industrial sector, the application of this technology has moved beyond simple monitoring into the realm of predictive maintenance. By capturing every vibration and thermal signature of a machine, a TSDB creates a detailed historical record that can be used to train AI models. These models can then identify the subtle precursors to equipment failure, allowing maintenance teams to intervene before a breakdown occurs and avoid costly downtime.

This "digital twin" approach, where a physical asset has a closely synchronized digital counterpart, depends on the high fidelity of time series data. Whether it is a fleet of delivery vehicles or a complex chemical refinery, the ability to see the "state" of the system at any recorded moment in the past provides a level of transparency that was previously hard to achieve.

Streamlining Analytics at Scale

Modern data strategies prioritize "moving the logic to the data" rather than moving the data to the logic. High-performance storage engines allow developers to run complex statistical functions—such as moving averages, percentiles, and standard deviations—directly within the database layer. This reduces network congestion and ensures that the application layer receives only the final, processed insights it needs to display to the user.
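To illustrate pushing the computation into the database layer, the sketch below uses Python's built-in `sqlite3` module as a stand-in. SQLite is not a time series database, and the `readings` table and its columns are assumptions made for this example; the point is only that the aggregation runs inside the engine and the application receives one summary row per sensor.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE readings (ts INTEGER, sensor TEXT, value REAL)")
conn.executemany(
    "INSERT INTO readings VALUES (?, ?, ?)",
    [
        (0, "a", 10.0),
        (60, "a", 14.0),
        (120, "a", 12.0),
        (0, "b", 5.0),
        (60, "b", 7.0),
    ],
)

# The database computes the aggregates; only the results cross the wire.
rows = conn.execute(
    "SELECT sensor, COUNT(*), AVG(value), MAX(value) "
    "FROM readings GROUP BY sensor ORDER BY sensor"
).fetchall()
conn.close()

print(rows)
```

Five raw rows go in and two summary rows come out; at production scale the same pattern keeps millions of raw points off the network.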

For organizations managing massive datasets, this internal processing capability is a game-changer. It allows for the creation of sophisticated alerting systems that can detect anomalies as they happen. For example, a system could be configured to trigger an alert only if the average pressure over the last ten minutes exceeds a certain threshold, effectively filtering out "noise" and preventing alert fatigue for operators.
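The windowed alert described above might look like the following sketch. The class name `RollingAverageAlert`, the 600-second window, and the threshold of 100 are all illustrative assumptions, and a real deployment would normally express this rule in the database's own alerting or query language.

```python
from collections import deque


class RollingAverageAlert:
    """Fire only when the average over a trailing time window is too high."""

    def __init__(self, window_seconds, threshold):
        self.window_seconds = window_seconds
        self.threshold = threshold
        self.points = deque()  # (timestamp, value) pairs inside the window
        self.total = 0.0

    def add(self, ts, value):
        self.points.append((ts, value))
        self.total += value
        # Evict points that have fallen out of the trailing window.
        while self.points[0][0] <= ts - self.window_seconds:
            _, old = self.points.popleft()
            self.total -= old
        return self.total / len(self.points) > self.threshold


alert = RollingAverageAlert(window_seconds=600, threshold=100.0)
readings = [
    (0, 90.0), (60, 90.0), (120, 90.0),
    (180, 125.0),  # a single spike; the window average stays below 100
    (240, 90.0),
    (300, 130.0),  # second high reading pushes the average over 100
    (900, 80.0),   # much later; older points have aged out of the window
]
fired = [alert.add(ts, value) for ts, value in readings]
print(fired)
```

The isolated spike at 180 seconds does not fire the alert because the window average absorbs it, which is the noise filtering the paragraph describes.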

The Technical Depth of TSDB Analysis

As systems scale, engineers need to analyze how resource consumption in a TSDB such as InfluxDB correlates with data complexity. One of the most common challenges is "cardinality," which refers to the number of unique series the database is tracking. Each distinct combination of identifiers, like serial numbers or IP addresses, creates a new series. Managing high cardinality without a drop in performance is a hallmark of an enterprise-grade solution, requiring sophisticated indexing strategies that don't overwhelm the system's memory.
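A small sketch can make cardinality concrete. It assumes each point carries a measurement name and a dictionary of tags, a common but not universal data model; the function names and sample tags are hypothetical.

```python
from math import prod


def series_cardinality(points):
    """Count distinct (measurement, tag set) combinations actually seen."""
    seen = set()
    for measurement, tags in points:
        seen.add((measurement, frozenset(tags.items())))
    return len(seen)


def worst_case_cardinality(points):
    """Upper bound: product of the number of distinct values per tag key."""
    values_per_tag = {}
    for _, tags in points:
        for key, value in tags.items():
            values_per_tag.setdefault(key, set()).add(value)
    return prod(len(values) for values in values_per_tag.values())


points = [
    ("cpu", {"host": "a", "region": "eu"}),
    ("cpu", {"host": "b", "region": "eu"}),
    ("cpu", {"host": "a", "region": "eu"}),  # repeat of the first series
    ("cpu", {"host": "a", "region": "us"}),
]
actual = series_cardinality(points)
upper_bound = worst_case_cardinality(points)
print(actual, upper_bound)
```

The upper bound grows multiplicatively with every new tag key, which is why adding a high-variety tag such as a serial number can inflate the index so quickly.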

Analytical deep-dives often focus on optimizing the "read path." By using columnar storage, the database can bypass irrelevant metrics during a query. If an analyst only needs to look at the energy consumption of a building, the system doesn't need to touch the data related to temperature or humidity, even if they were recorded at the exact same time. This selective reading is what enables massive datasets to remain interactive and useful for daily decision-making.
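The difference between row and column layouts can be sketched as follows. The field names (`energy_kwh`, `temperature_c`, `humidity_pct`) and values are invented for illustration, and a real columnar engine stores each column in compressed blocks on disk rather than in Python lists.

```python
def to_columns(rows):
    """Pivot a list of row dicts into one list per field."""
    columns = {}
    for row in rows:
        for name, value in row.items():
            columns.setdefault(name, []).append(value)
    return columns


# Row layout: every reading carries all of its metrics together.
rows = [
    {"ts": 0, "energy_kwh": 1.5, "temperature_c": 20.1, "humidity_pct": 40.0},
    {"ts": 60, "energy_kwh": 2.0, "temperature_c": 20.2, "humidity_pct": 41.0},
    {"ts": 120, "energy_kwh": 2.5, "temperature_c": 20.2, "humidity_pct": 41.5},
]

columns = to_columns(rows)

# An energy query touches one column and never reads the other metrics.
total_energy = sum(columns["energy_kwh"])
print(total_energy)
```

In the row layout the same query would have to step over temperature and humidity values in every record just to reach the energy field.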

The Intersection of Edge Computing and Connectivity

The future of data management is increasingly decentralized. With the rollout of 5G and the proliferation of powerful edge devices, more data is being processed at the source. This creates a need for databases that can run in resource-constrained environments while remaining part of a larger, unified cluster. These "edge-to-cloud" architectures allow for local autonomy—ensuring a factory can keep running even if the internet goes down—while still providing a centralized view for global management.

Security also plays a vital role in this connected ecosystem. Modern platforms are integrating end-to-end encryption and robust identity management to ensure that as data moves from a remote sensor to a central dashboard, it remains protected. As data becomes the most valuable asset an organization owns, the infrastructure that houses it must be built with a "security-first" mindset.

Building a Future-Proof Foundation

Selecting a database is a long-term decision that influences the agility of an entire organization. By opting for a system designed specifically for the unique properties of temporal data, companies can avoid the performance bottlenecks and "technical debt" associated with older, more rigid database models. The flexibility to handle diverse data types, combined with the power to scale on demand, ensures that the infrastructure can grow alongside the business.

Ultimately, the goal is to transform raw, time-stamped numbers into a strategic advantage. Those who can efficiently capture, store, and analyze the flow of time will be best positioned to innovate in an increasingly fast-paced world. The transition to a dedicated time series architecture is not just a technical upgrade; it is the first step toward becoming a truly predictive, data-driven enterprise.
